Cache Logic / Operations¶

When an object in cache (or potentiallyin cache) is active in a transaction, it is tracked by an

ODE, which is an instance of OpenDirEntry 1. For any cache oject the first operation is

always a read.

Read¶

Reading starts with Cache::open_read. This attempts to get the stripe lock and then probes

the open directory entries and the stripe directory. A CacheVC instance is created if

either the lock is missed or there is a hit in the hit. The other case indicates a valid

determination that the read is a a miss.

The next step depends on the result. If the lock was missed CacheVC::stateProbe is next,

which waits for the stripe lock and then does the cache probe and goes on to the same as

Cache::open_read. If a OpenDirEntry was found it is checked for being valid. If

not, or a OpenDirEntry wasn’t found then one is created and the CacheVC goes to

the CacheVC::stateReadFirstDoc which loads the first doc fragment from disk. If another

CacheVC instance is already reading from disk then the CacheVC waits for the read

to complete.

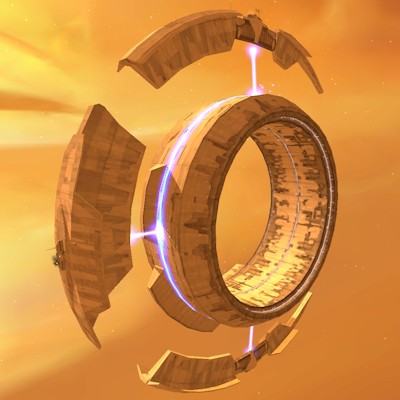

Cache Startup¶

State machine.

![digraph cache {

node

[shape=Mrecord width=1.5];

subgraph cluster_Cache {

label="Cache";

//

// States (Nodes)

//

"Cache::idle"

[label="{idle}"];

"Cache::Finish"

[label="{Finish}"];

"Cache::open_write"

[label="{open_write}"];

"%start"

[label="" shape=circle style=filled fillcolor=black width=0.25];

}

subgraph cluster_Vol {

label="Vol";

//

// States (Nodes)

//

}

subgraph cluster_OpenDirEntry {

label="OpenDirEntry";

//

// States (Nodes)

//

"push(OpenDirEntry::Default)"

[label="" shape=plaintext];

}

subgraph cluster_CacheVC {

label="CacheVC";

//

// States (Nodes)

//

"CacheVC::openWriteStartBegin"

[label="{openWriteStartBegin}"];

"CacheVC::dummy"

[label="{dummy}"];

"CacheVC::openWriteInit"

[label="{openWriteInit}"];

"CacheVC::openWriteOverwrite"

[label="{openWriteOverwrite}"];

"CacheVC::pop(LOCK_READY)"

[label="" width=1]

"CacheVC::%end"

[label="" shape=doublecircle style=filled fillcolor=black width=0.15];

"CacheVC::openWriteStartBegin::CacheVC"

[label="{CacheVC|O-O\r}"]

"CacheVC::openWriteInit::OpenDirEntry"

[label="{OpenDirEntry|O-O\r}"]

}

//

// Transitions (Edges)

//

"Cache::idle" -> "Cache::open_write"

[label="Cache::open_write/\l"];

"Cache::open_write" -> "CacheVC::openWriteStartBegin"

[label="lock_failed/\l"];

"Cache::open_write" -> "CacheVC::openWriteOverwrite"

[label="overwrite/\l"];

"Cache::open_write" -> "CacheVC::openWriteInit"

[label="write/\l"];

"%start" -> "Cache::idle"

"push(OpenDirEntry::Default)" -> "OpenDirEntry::Default"

[arrowtail=odot];

"CacheVC::openWriteStartBegin" -> "CacheVC::openWriteStartBegin::CacheVC"

[label="unlocked/\lpush(dummy)\l"];

"CacheVC::openWriteStartBegin" -> "CacheVC::dummy"

[label="dummy/\l"];

"CacheVC::openWriteStartBegin" -> "CacheVC::openWriteInit"

[label="LOCK_READY/\l"];

"CacheVC::dummy" -> "CacheVC::pop(LOCK_READY)"

[label="lock/\l"];

"CacheVC::openWriteInit" -> "CacheVC::openWriteInit::OpenDirEntry"

[label="unlocked/\lpush(OpenDirEntry::Default)\l"];

"CacheVC::pop(LOCK_READY)" -> "CacheVC::%end"

[label="pop(LOCK_READY);\l"];

"CacheVC::openWriteStartBegin::CacheVC" -> "CacheVC::openWriteStartBegin"

[label="pop/"]

"CacheVC::openWriteInit::OpenDirEntry" -> "CacheVC::openWriteInit"

[label="pop/"]

}](../_images/graphviz-abe1fb346cc194dcd816c610aa7973c26afae21a.png)

Lock Waiting¶

To avoid the current issues with excessive event flows when many CacheVC instances are

waiting for locks along with many failed lock attempts, the Vol and OpenDirEntry

maintain lock waits lists. These are organized differently due to the different natures and access

patterns for the classes. In both cases the wait lists are lists of Traffic Server events that refer to

CacheVC instances. This indirection is critical in order to avoid problems if a

CacheVC is destroyed while waiting for a lock. It can then cancel the event without leaving

dangerous dangling pointers. The lock owner can then discard canceled events without any further

dereferencing. This is the same logic used by the core event loop.

Vol uses thread local storage to store wait lists. For each thread there is a vector of

event lists. Each Vol has a numeric identifier which also serves as its index in the

vector. This is possible because the set of Vol instances is determined by the cache

configuration and therefore fixed at process start time. Content for Vol locks is, based on

experimental measurements, the lock with the highest contention and therefore is worth special

casing for performance. The locks are spread among threads for a few reasons.

- Load Distribution

The number of waiting

CacheVCinstances can be large and having them all dispatch in one pass could easily introduce excessive latency in to whichever event loop gets unlucky. Conversely breaking up thundering herds somewhat from the cache point of view is also beneficial.- Thread Consistency

Waiting

CacheVCinstances can be dispatched on their preferred thread. This is more important forVolinteraction as those are much close to the HTTP state machine which prefers to always run on a single thread.

OpenDirEntry uses a single global atomic list of events per instance. It is not feasible to

use thread local storage because instances of OpenDirEntry are created and destroyed

frequently and the corresponding number of instances fluctuates over a large range. The load

distribution issue is of lesser importance because of the (usually) much larger number of instances

which spreads the load when the instances become available. It is unfortunate this causes

CacheVC instances to dispatch on other threads but the OpenDirEntry interactions

tend to be less closely coupled to HTTP state machine instances.

Write Operations¶

Footnotes

- 1

Previously an ODE was opened only for a write operation. This was workable when only one write operation per cache object could be active at a time. The current architecture has a much more complex relationship between reading and writing cache objects and so requires tracking to start earlier. This is also necessary for collapsed forwarding so that multiple readers are detected before a write is started.